How many sprinters does it take to change a lightbulb, or what about important but not significant?

I often have discussions with non-methodologists about statistics and research methods. What can I say, it’s what I do. Statistics is one of those things that everyone has to learn, but most people feel they just don’t understand. So, instead of trying to understand it, they create hard-and-fast rules for themselves to get around it. It’s like chronic double-clickers; you know, that one person you know at work or in your family that double-clicks EVERYTHING, and doesn’t know how to right-click? Generally, it’s people who don’t understand, or aren’t comfortable with computers. They know that some things require double-clicks and it seems to work most of the time, so instead of figuring out that hyperlinks only need one click, they just double-click everything. And they use the menus for everything, including cutting and pasting, because they find CTRL-C and CTRL-V to be too advanced, and even after you show them several times that, “Hey, look how much faster it is to do it this way!” they say something like, “That’s too complicated for me,” but won’t relinquish the mouse or keyboard to you, and then still complain that, “It takes forever to do that on a computer.” And no, this is definitely not my dad. In any way whatsoever. In the research world, it’s similar–people who ONLY use ANOVAs, or rely on normality statistics to figure out if a distribution is normal (just graph it!) for instance…but I’m getting off topic.

There is an argument that statistics limit progress: that requiring an effect to be statistically demonstrated is too restrictive, because small but important effects in small populations make it mathematically very difficult to “obtain” a p-value less than 0.05.

The example quoted to me recently was that it would be impossible to show that an intervention makes sprinters run faster, because the relevant effect size is so small (less than one tenth of a second).

There are two ways to look at this issue: The math way, and the big-picture way.

First, the math way:

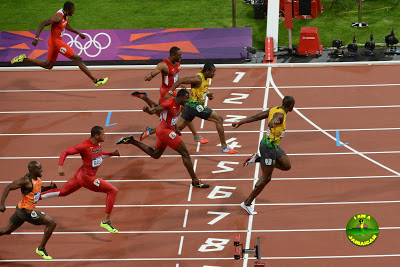

So, if you were to design a repeated-measures trial, and let’s say 0.1 seconds is the effect we’re looking for, we could use the top seven times at the London Olympics as pilot data to make our sample size calculation.

The mean 100m sprint time in the finals was 9.82 seconds, with a standard deviation of 0.12 seconds. To detect an improvement of 0.1 seconds in that mean time, with an alpha level of 0.05 and a power of 0.8, we would need 19 sprinters.

That’s not that many sprinters, when you think about it. Finding 19 who all race around that 9.8-second time, however, could be an issue. But it’s not nearly as impossible as one would be led to believe, at least from a numbers point of view.
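If you want to play with the numbers yourself, here is a rough sketch of this kind of calculation in Python. It is not the calculation behind the 19 above; it uses a plain normal approximation for a paired (before/after) comparison, and the function name `paired_sample_size` is made up for illustration. The big assumption is the standard deviation of the within-sprinter differences, which the pilot data don't give us, so the sketch tries two guesses: the same 0.12 seconds as the pilot SD, and 0.12 × √2, which is what you'd get if the before and after times were uncorrelated.

```python
from math import ceil, sqrt
from statistics import NormalDist


def paired_sample_size(delta, sd_diff, alpha=0.05, power=0.80):
    """Normal-approximation sample size for a two-sided paired comparison.

    delta   -- smallest mean change worth detecting (seconds)
    sd_diff -- assumed SD of the within-sprinter differences (seconds)
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_power = NormalDist().inv_cdf(power)          # quantile for the desired power
    return ceil(((z_alpha + z_power) * sd_diff / delta) ** 2)


sd = 0.12     # pilot SD from the post (top seven times in the final)
delta = 0.10  # effect we are looking for

# Assumption 1: the differences vary as much as the pilot times do.
n_same_sd = paired_sample_size(delta, sd_diff=sd)

# Assumption 2: before/after times are uncorrelated, so the SD grows by sqrt(2).
n_uncorrelated = paired_sample_size(delta, sd_diff=sd * sqrt(2))

print(n_same_sd, n_uncorrelated)  # prints: 12 23
```

The normal approximation runs a little low compared with a proper t-based calculation, and the answer swings a lot with the assumed SD of the differences. Either way you land in the low tens of sprinters, not the thousands, which is the point.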

But this brings me to the more important part of this post, which is the two bigger-picture perspectives on this issue:

1) Statistics are tools.

I’ll say that again: Statistics are tools. They do not define importance. They only define improbable. No amount of statistical significance can make something that isn’t relevant, important.

The converse of this is also true: If something is truly important and relevant, no amount of statistical testing is going to negate that importance.

I’ll depart from the sprinting example here because I think the point is made more succinctly with a medical example. Prior to the 1920s, type 1 diabetes was a fatal disease. In 1921, Banting and Best discovered insulin. They gave Leonard Thompson insulin and saved his life. N of 1. But a very important 1. In a world of a universally fatal disease, an effect of 1 is both relevant and important. This isn’t to say that there haven’t been other similar N’s of 1, but what’s more relevant is the completely consistent replicability of the result of Banting and Best. There has never been a randomized trial comparing placebo insulin with real insulin in the treatment of type 1 diabetes. No p-values. There’s actually no point in doing a statistical test on something that works 100% of the time. 1 is definitely different from 0.

2) It’s easy to get caught up in the details and forget about generalizability and context.

When we’re talking about elite sprinters at the Olympic level, where 0.1 seconds matters, we’ve essentially escaped the bounds of conventional study. The number of sprinters who have been recorded to break the 10-second barrier since 1968 is 83. At the 2012 Olympics, 7 sprinters had times less than 10 seconds. If you couldn’t break the 10-second barrier, 0.1 seconds was not a relevant number for you, because you would have needed a far greater (comparatively) time difference to win.

Therefore, while 0.1 seconds is really important for those sprinters who CAN break 10 seconds, it’s completely irrelevant for sprinters who CAN’T break 10 seconds. Any study that looks at improving sprint times by 0.1 seconds, therefore, would only have been relevant for 7 people in the recorded world. Similarly, if we were to somehow find 19 sprinters to do this study (and they do exist), the results would only be generalizable to those sprinters who could break 10 seconds.

In reality, these athletes exist at the extremes of human limits. They are so unique in their ability that it’s probably harder NOT to mess them up than it is to simply nurture their in-born traits. Studying them to make generalizable statements is ridiculous. To study 10-second breakers, you wouldn’t need to sample the population, because there are so few of them that you could just study the population itself, which then renders the entire point of statistics (which is the ability to infer conclusions based on a SAMPLE and apply them to the larger population) moot. No statistician in the world would ever say that a study in which the entire applicable population was studied was poor due to a lack of inferential statistics or a p-value.

However, you are not at the extreme of human limits. Most of us are not. Apologies if Usain Bolt is reading this. The context IS important. For the effects of interventions that are relevant to mere mortals, most studies ARE feasible. They DO require sampling, and thus, by necessity, statistics. An important effect in a relevant population is only limited by the imagination and knowledge of the researcher who studies it. Dismissal of the larger picture is only for those who lack the knowledge, the experience or the will to see the question through to its fruition.