HIIT vs Steady State–Who will win??

I’m no “real world” trainer, but if there’s anything that I am, it’s a “real world” researcher. And if there’s anything I can say that I do well, it’s research reviews. This review was a very exciting thing for me to do because this is a trial that has “real world” training implications and hopefully, you will see that not everything is crystal clear in an abstract. It also enabled me to demonstrate a few examples of how alternate analyses can be useful as well as the importance of succinct data reporting.

The thesis (not even published yet in the journals!) by Ethlyn Gail Trapp from the University of New South Wales in Australia has already made massive ripples in the virtual fitness community–at least the ones that I pay attention to. For those of you just hearing about it here, here’s the abstract from Ms. Trapp’s thesis:

“Study 2, a 15 week training study, was a randomised controlled trial comparing the effects of short bout HIIE and steady state (SS) exercise on fat loss. Forty-five women were randomly assigned to one of three groups: HIEE, SS or control.

Preliminary and posttraining testing included a DEXA scan and a VO2peak test including blood collection. All participants completed 3-d diet diaries and maintained their current diet for the course of the study. Participants exercised three times a week for the next 15 weeks under supervision. The HIIE group did 20 min of HIIE (8 s work: 12 s rest) at a workload determined from the VO2peak test. The SS group cycled at 60% VO2peak, building to a maximum of 40 min exercise.

Both groups increased VO2peak. The HIIE group had a significant loss of total body mass (TBM) and fat mass (FM) when compared to the other groups. There was no change in dietary intake. There have been a number of studies examining the acute effects of HIIE but, to our knowledge, this is the first study examining the chronic effects of this particular exercise protocol”

Of all the things I will review in this critique, one thing I won’t really get at directly is this mysterious, “How did the Steady State group _gain_ weight?” You will see as we go along that using a mean is fraught with potential for misinterpretation, and while I won’t be able to explain why some individuals gained weight during this study, I will definitely be able to comment on some common misconceptions when interpreting study results.

One of the nice things about a thesis, is that with small sample sizes, the raw data is actually published in an Appendix, so you will see a wee bit of re-analysis on my part, which will hopefully shed some light on things a bit better. This of course, will just spawn more controversy, but if I ran from any controversy…well, let’s just say I’d be in a heckuva lot better shape than I am now. I will tell you that I did not treat this thesis any differently than I would have had I been reviewing for a peer-reviewed journal, and that I have done re-analyses before.

Before I start into the review though, I will also put in a plug for any researchers out there for the CONSORT statement (the link is to the right), which is a consensus statement by a schwak of very smart people on how randomized controlled trials should be reported, and makes for a nice checklist if you haven’t had a lot of experience reading these kinds of studies. The assessment of the quality of these items however, comes with experience and education (and occasionally, blood, sweat, tears and the promise of your first-born if only you could understand what the stats prof was trying to say.)

The gist of this study is as follows [with my critique in square brackets]:

Forty-five females aged 18-30, who were non-smokers, non-exercising, but were otherwise healthy were recruited for this study. They were recruited by posters around the University of New South Wales. They were screened with a PAR-Q (traditional screening tool for exercise clearance) and then weighed, scanned, and generally oriented to their program. Before any testing, each woman was assigned randomly (through drawing names from a hat) to one of three groups: the High Intensity Interval Exercise group (HIIE), the Steady State group (SS) or the control group. Fifty-one volunteers were initially tested (and thus already assigned to a group), but 6 of these women withdrew from the study before starting their training program.

[So before I’ve read further into this study, I see at least four problems here. 1) Drawing names from a hat is not generally a scientific randomization method. You run into problems with reproducibility (if we were to suspect that the subjects weren’t randomly put into groups, the researchers would have no way to prove or disprove it because it’s impossible to replicate the hat draw.) 2) Fifty-one women were initially assigned to one of the three groups, but only 45 women stayed in the study before they started any training at all. But there’s no comment about these 6 women, and why they decided to drop out. Did some of them drop out because they got HIIE and were concerned it would be too hard? Or did some of them drop out because they got put into the control group and were disappointed that they would have to not exercise for 15 weeks? There’s no way to tell, because this information isn’t published. 3) We also have no idea how many women were excluded from the trial before any testing was done and the reasons why they were excluded. So we’re left in the dark as to whether there was any selection bias on the part of the researchers. And 4) we don’t really have a good idea of why the sample size was so small. The author mentions in her overview that they were looking for a large effect size of 0.9, but 0.9 what? Kilograms? Proportion of people who lost weight? So the sample size of 15 per group is left relatively unjustified because there is vital information missing. This is not necessarily a problem, as I may discuss in a later article.]

The subjects were tested to determine their VO2max. All the women were tested during the follicular stage of their menstrual cycle, and all the women ate a 60-70% carbohydrate meal the night before testing. Baseline measurements were taken at this time: mass, height, resting blood pressure, resting heart rate, resting lactate, glucose, and blood lipid profiles, as well as a complete blood count (CBC). Unfortunately, since the subjects were untrained, they often couldn’t ramp up enough during the testing to achieve a true VO2max. So, the maximum workload at which they stopped cycling was used as their measure of aerobic power and is referred to as their VO2peak.

[This is an important distinction to make, though again, it may not be a critical problem. VO2max is the gold standard for measuring aerobic capacity. However, there are criteria that need to be met in order for a VO2max to be considered a valid one. In this case, we are not working with a true VO2max, but rather the next-best thing, since most untrained individuals can’t work hard enough to achieve their VO2max.]

Each subject also underwent DEXA testing to measure body fat, lean tissue mass and bone mineral content as well as bone mineral density. DEXA is a validated radiological method of measuring body fat.

Subjects also did 3-day diet records (2 weekdays, 1 weekend day) before training began, at intermittent times during training and at the end of the training period. While there are limitations to diet logs, I’ll perhaps discuss this in a later article as well.

Subjects in the HIIE group did 3 workouts per week. Each workout was supervised by a member of the research team (i.e. this was not a home program). Each workout consisted of a 5 minute warm-up on the cycle ergometer, followed by intervals of 8 seconds of all-out cycling, followed by 12 seconds of relative rest (just working hard enough to keep the wheel spinning). Subjects started with 5 minute work periods (i.e. 5 minutes warm-up, 5 minutes intervals, 5 minutes cool-down) and gradually increased to 20 minute interval work periods. Most women managed to ramp up to 20 minutes in the first 2 weeks of the training program (a 15-week program in total). If subjects missed a workout, they made it up so that 45 sessions would be done in as close to 15 weeks as possible. Some subjects did do home activity, but only if it was a holiday. As the women got used to their workload of 0.5 kp, they were encouraged to increase their workload in increments of 0.5 kp (think of kp as how tightly the resistance knob is turned).
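To put the 8 s : 12 s protocol in perspective, here is a quick back-of-the-envelope sketch of how much all-out work a full 20 minute interval block actually contains. The function name is my own and purely illustrative, and I'm ignoring the warm-up and cool-down and assuming every session runs the full 20 (HIIE) or 40 (SS) minutes:

```python
# Interval arithmetic for the two protocols as summarised above.
# Assumption: full-length sessions, warm-up and cool-down excluded.
SESSIONS_PER_WEEK = 3


def hiie_breakdown(minutes, work_s=8, rest_s=12):
    """Return (cycles, sprint minutes, easy minutes) for one interval block."""
    cycles = (minutes * 60) // (work_s + rest_s)
    return cycles, cycles * work_s / 60, cycles * rest_s / 60


cycles, sprint_min, easy_min = hiie_breakdown(20)
print(f"HIIE block: {cycles} sprints, {sprint_min:.0f} min all-out, {easy_min:.0f} min easy")

weekly_sprint_min = SESSIONS_PER_WEEK * sprint_min
weekly_ss_min = SESSIONS_PER_WEEK * 40
print(f"Per week: {weekly_sprint_min:.0f} min of sprinting vs {weekly_ss_min} min of steady state")
```

So a "20 minute" HIIE session is 60 sprints and only 8 minutes of all-out pedalling, which is worth keeping in mind when comparing it against 40 minutes of steady cycling.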

Subjects in the SS group also did 3 workouts per week. Each workout was also supervised, except in the case of holidays. Each workout consisted of a 5 minute warm-up, followed by a steady state period, and then finishing with a 5 minute cool-down. The workload for each steady-state period was set at a level such that each woman achieved the same heart rate that corresponded to 60% of the VO2peak. The duration of this training started at 10-20 minutes, and advanced to 40 minutes. All subjects pedaled at 60 rpms during the steady-state period. Once subjects could cycle for 40 minutes in the steady-state period, they were encouraged to increase their workload by 0.5 kp to keep their rpms at 60 for 40 minutes (since with training, they would eventually have the ability to hold 40 minutes of steady-state pedalling at higher than 60 rpms).

The control group did not have any supervised training sessions and were asked to maintain their current physical activity level and dietary habits for 15 weeks. They also had intermittent food logs to keep and submit.

Stats (for the geeks):

The authors used repeated-measures ANOVAs to test the differences between the two training protocols and control group. They used post-hoc testing if an ANOVA yielded a significant p-value.

Results:

On the whole, the three groups were comparable with respect to all their baseline characteristics. The author did do significance tests to “confirm” that no statistical differences existed, but this is generally considered inappropriate testing (I’ll probably write a small article on this in the future). The primary outcome of this study seemed to be body composition changes. This means that all other analyses can be generally considered secondary and exploratory, since after a few significance tests, you drastically increase the chances that you will detect a statistically significant difference purely by chance, when one does not actually exist in the overall population (otherwise known as a type I error). This is fortunate for us, because fat loss is the variable we’re most interested in. In terms of drop-outs, 4 people dropped out of the HIIE group, and 7 people dropped out of the SS group. We are not told why they dropped out, but are told that no differences exist between the drop-outs and the people that stayed in. This data is not provided.

[So why do I bother mentioning this? Again, we are on the hunt for bias in the study. Did these people drop out because they weren’t seeing results? Because they died? Because they sustained an injury? Because they just didn’t feel like exercising anymore? Because they just couldn’t handle losing weight? We just don’t know. What makes this important is that ultimately, the analysis was not what we call an “intention-to-treat” analysis. That is, not all of the people who were randomized were analysed. The potential for bias is therefore not ascertainable, and may very well be present. This is perhaps the biggest flaw of this study, though the effects of the flaw are somewhat unknown. This is also particularly salient because we are not looking at small drop-out numbers. 4/15 in the HIIE group and 7/15 in the SS group correspond to just over a quarter and almost one half of the subjects in each group, respectively. If this were a drug study and we found that drug A totally outperformed drug B, but that half the people dropped out of the study because they DIED (or experienced significant side effects), I don’t think either drug would be going to market any time soon. Not that I think anyone died in this study, but that’s not the point…]
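A quick aside on the multiple-testing point from the results paragraph above: the chance of at least one false positive climbs quickly as you run more tests. Here is a minimal sketch, assuming independent tests each run at a 0.05 significance level. Both of those are my assumptions for illustration; the thesis summary here doesn't tell us how many secondary tests were run, so the test counts below are hypothetical:

```python
def familywise_error_rate(n_tests, alpha=0.05):
    """Chance of at least one type I error across n independent tests."""
    return 1 - (1 - alpha) ** n_tests


# Hypothetical numbers of tests, purely to show the trend.
for n_tests in (1, 5, 10, 20):
    rate = familywise_error_rate(n_tests)
    print(f"{n_tests:>2} tests: {rate:.0%} chance of at least one false positive")
```

With ten such tests you are already at roughly a 40% chance of a spurious "significant" result, which is why everything other than the primary outcome is best read as exploratory.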

On to the main outcomes: Total body mass, and fat mass.

In terms of total body mass, the researchers failed to find a significant difference between the SS and HIIE group (the phrase “failed to find a significant difference” meaning something very different than “there was no difference”, which I may follow up on in a later article.) The SS group lost an average of 0.11 kg (the variability was reported as 0.41 kg, but whether this is a standard deviation or a standard error isn’t clear–and the two things are distinctly different from one another). The HIIE group lost an average of 1.51 kg (the variability reported as 0.95 kg, again, standard deviation vs. standard error not known.)
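To show why the standard deviation vs. standard error distinction matters, here is a small sketch of what each reported number would imply under either reading, using the relationship SE = SD / sqrt(n). The group sizes are my assumption: 15 per group minus the reported drop-outs, so 11 completers in HIIE and 8 in SS.

```python
import math


def se_from_sd(sd, n):
    """Standard error of the mean implied by a standard deviation."""
    return sd / math.sqrt(n)


def sd_from_se(se, n):
    """Standard deviation implied by a standard error of the mean."""
    return se * math.sqrt(n)


# Assumed completers: 15 randomised per group minus reported drop-outs.
groups = [("HIIE", 0.95, 15 - 4), ("SS", 0.41, 15 - 7)]

for label, reported, n in groups:
    print(f"{label} (n={n}), reported {reported} kg:")
    print(f"  if it is an SD, the SE would be {se_from_sd(reported, n):.2f} kg")
    print(f"  if it is an SE, the SD would be {sd_from_se(reported, n):.2f} kg")
```

The two readings differ by a factor of about three at these sample sizes, so the same number can describe either a tight cluster of results or a very scattered one.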

[When you think about it though, 15 weeks of training to lose, on average, 1.51 kg, or 3.3 lbs vs. 0.11 kg or 0.24 lbs…how meaningful is this either way? Even if the author had found a statistically significant difference, I’m not sure I would care.]

In terms of fat loss, they did find a statistically significant difference between the two training groups. The HIIE group lost, on average 2.5 kg (standard deviation 0.8 kg); and the SS group GAINED, on average 0.4 kg (standard deviation 0.9 kg.) And I know it’s a standard deviation, because I recalculated this stuff with the raw data in this thesis.

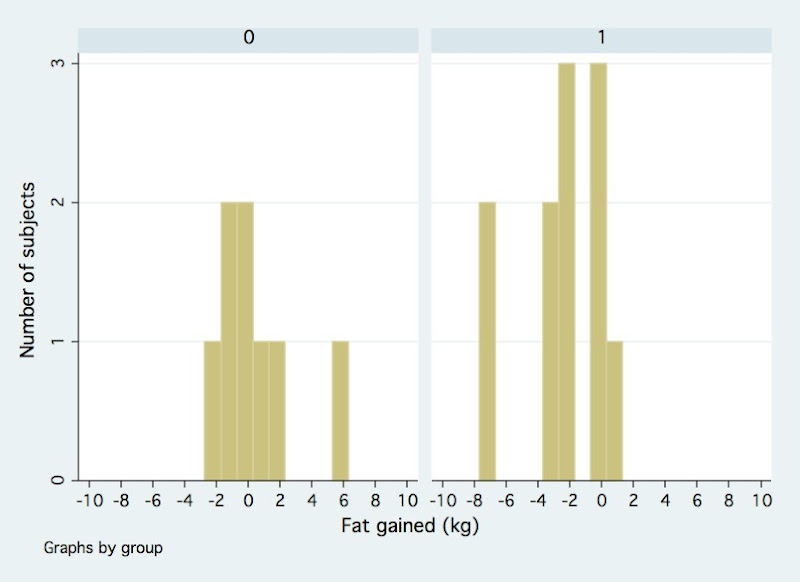

If we look at how individuals did on both training protocols you get this sort of picture:

Where group 0 is the SS group and group 1 is the HIIE group. The Y-axis is the number of people who lost or gained fat over the 15 weeks. And the X-axis is the number of kilograms of fat lost or gained over 15 weeks. Negative numbers mean fat was lost. As you can see the poor SS group has this one outlier who gained 5.9 kg! And the fortunate HIIE group had not one, but two outliers who lost about 7.7 kg each! In such a small sample, these three individuals would have pulled the averages (means) in their respective groups outwards in their respective directions, and may have made it seem like there was a larger difference than there might have been if they hadn’t been in the study. It certainly does make a strong argument for why there seemed to be an average gain of 0.4 kg in the SS group. But, we can’t boot them from the analysis just because they performed the way they did, so we have to get at precision in another way.

Another useful tool in looking at how precise this difference is, is to look at the 95% confidence interval for the measured difference. A confidence interval gives us an idea of how precise the estimate is, in the context of repeated experiments. In the case of a 95% confidence interval, it tells us that if we repeated this experiment 100 times, each time with different subjects picked at random from the same available population, and calculated an interval each time, about 95 of those 100 intervals would contain the ACTUAL difference in the overall target population. This is an important interval because the wider it is, the less faith we have in the precision (i.e. how close the estimated difference measured in the study is to the actual difference), and if it includes 0, or a number close to 0, we can’t be confident there is any meaningful difference at all.

For the geeks, the pooled standard deviation of the sample was 2.64. The pooled standard error was 1.22. The 95% confidence interval of the difference between the two groups (which was 2.5 kg - (-0.4 kg) = 2.9 kg) is 0.5 to 5.3. The way we interpret this is that the data are consistent with a true difference in fat loss between the two groups of anywhere from 0.5 kg to 5.3 kg (that’s 1.1 lbs to 11.7 lbs, for you non-metric folks.) On the whole, that’s a pretty wide range. Don’t confuse this with actual fat loss. This confidence interval describes the DIFFERENCE between the two groups, not the actual loss in each group. So, if we were to do this trial again with different people who met the inclusion/exclusion criteria, it would not be surprising to see anything from the HIIE group losing 0.5 kg more fat than the SS group, to the HIIE group losing 5.3 kg more fat than the SS group.
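For anyone who wants to check the arithmetic, here is a minimal sketch of how an interval like this can be computed from the numbers above. The group sizes (11 and 8) are my assumption, taken as 15 per group minus the reported drop-outs, and I'm using a normal critical value (about 1.96) because that is what lands on the 0.5 to 5.3 interval. The function name is just for illustration.

```python
import math
from statistics import NormalDist


def diff_ci(mean_diff, pooled_sd, n1, n2, level=0.95):
    """Normal-approximation confidence interval for a difference in means."""
    # Standard error of the difference, from the pooled standard deviation.
    se = pooled_sd * math.sqrt(1 / n1 + 1 / n2)
    # Two-sided critical value, e.g. about 1.96 for a 95% interval.
    z = NormalDist().inv_cdf(0.5 + level / 2)
    return se, (mean_diff - z * se, mean_diff + z * se)


# 2.9 kg difference, pooled SD 2.64; assumed 11 HIIE and 8 SS completers.
se, (lower, upper) = diff_ci(2.9, 2.64, n1=11, n2=8)
print(f"SE of the difference: {se:.2f} kg")
print(f"95% CI: {lower:.1f} kg to {upper:.1f} kg")
```

With samples this small, a t critical value (about 2.11 for 17 degrees of freedom) would arguably be the better choice, and it would widen the interval slightly, to roughly 0.3 to 5.5 kg. Either way the conclusion is the same: the interval excludes 0, but it is wide.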

There were other outcomes measured and discussed in this study, but I’m not going to go into them because fat loss is what we’re really interested in here.

The Bottom Line:

On the whole, I would say this is definitely underwhelming evidence that High Intensity Interval Exercise is superior to Steady State exercise for fat loss. There are several methodological concerns with respect to the randomization method, the lack of description of blinding (we actually have no idea who was blinded to what–particularly whether evaluators were blinded or not, since it’s self-evident that the subjects weren’t blinded) and the main drawback being the lack of an intention-to-treat analysis. It really is a bit of a case of a GREAT trial idea, with sub-optimal trial design and execution (and analysis!). However, the study certainly does build a minor case for HIIT or HIIE. The unfortunate part of all of this is that despite all of the potential places that bias could have occurred, and the HIIE group doing statistically better, the HIIE group STILL only lost, on average 2.5 kg of fat over FIFTEEN weeks. That’s 5.5 lbs of fat lost in about three and a half months. That works out to roughly 1.6 lbs per month, or a bit over a third of a pound per week of fat lost. And that’s an average number with the help of two women losing almost 8 kg of fat! It’s not exactly the dramatic transformation we all hope for after 15 weeks of gut-wrenching interval work.

So, we’re still left somewhat in the dark with this debate. It’s unfortunate that such a great opportunity for a great study was lost, but there’s still room for improvement!